U.S. Military vs. Out-of-Control Killer Robots

Join us and tell your reps how you feel!

During a confirmation hearing with the Senate Armed Services Committee, General Paul Selva advocated "keeping the ethical rules of war in place lest we unleash on humanity a set of robots that we don’t know how to control."

Selva, America’s second-highest-ranking military officer, made his remarks in response to a question from Senator Gary Peters (D-MI). Peters asked Selva about a Department of Defense directive that requires a human to make the final call before an autonomous weapon kills a person. For example, before a drone can kill a human, a human must give the OK.

The DoD directive is due to expire later this year, and Peters warned that America’s enemies wouldn’t hesitate to use the killer-robot technology.

"Our adversaries often do not consider the same moral and ethical issues that we consider each and every day," Peters told Selva.

"I don’t think it’s reasonable for us to put robots in charge of whether or not we take a life," Selva told the committee during the confirmation hearing for his reappointment as the vice chairman of the Joint Chiefs of Staff.

"There will be a raucous debate in the department about whether or not we take humans out of the decision to take lethal action," Selva said, but added that he remained in favor of "keeping that restriction."

The general’s views conflict with those of the Navy, which seeks to pursue autonomous drones. But Selva’s assessments are largely in line with those of a group of entrepreneurs and scientists, including Stephen Hawking, Elon Musk and Steve Wozniak, who signed an open letter in July 2015 calling for a "ban on offensive autonomous weapons beyond meaningful human control":

"Autonomous weapons are ideal for tasks such as assassinations, destabilizing nations, subduing populations, and selectively killing a particular ethnic group. We therefore believe that a military AI arms race would not be beneficial for humanity. There are many ways in which AI can make battlefields safer for humans, especially civilians, without creating new tools for killing people."

While Selva condemns autonomous killing technology, he still supports the Pentagon researching how to defend against it.

The ban "doesn’t mean that we don’t have to address the development of those kinds of technologies and potentially find their vulnerabilities and exploit those vulnerabilities," Selva told the committee.

The Pentagon’s stance on robots taking their own life was not discussed.

Prove you’re not a robot by sharing your human thoughts. Should the Defense Department lift its ban against autonomous killings? Should human-backed drones even be allowed in war?

Use the Take Action button to tell your reps what you think about killer robots.

— Josh Herman

(Photo Credit: ArmyTechnology.mil / Creative Commons)

The Latest

-

IT: 🖋️ Biden signs a bill approving military aid and creating hurdles TikTok, and... Should the U.S. call for a ceasefire?Welcome to Thursday, April 25th, readers near and far... Biden signed a bill that approved aid for Ukraine, Israel, and Taiwan, read more...

IT: 🖋️ Biden signs a bill approving military aid and creating hurdles TikTok, and... Should the U.S. call for a ceasefire?Welcome to Thursday, April 25th, readers near and far... Biden signed a bill that approved aid for Ukraine, Israel, and Taiwan, read more... -

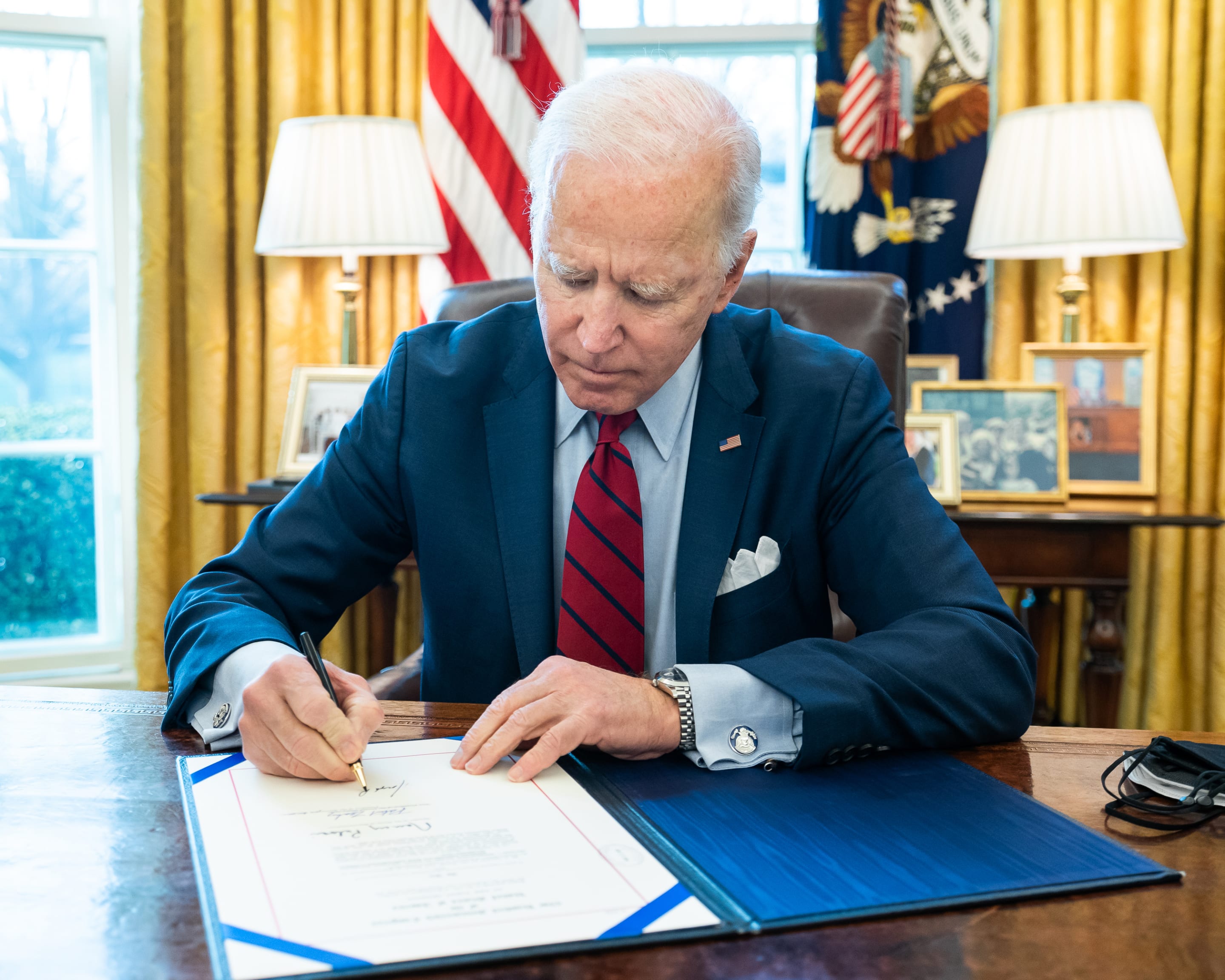

Biden Signs Ukraine, Israel, Taiwan Aid, and TikTok BillWhat’s the story? President Joe Biden signed a bill that approved aid for Ukraine, Israel, and Taiwan, which could lead to a ban read more... Taiwan

Biden Signs Ukraine, Israel, Taiwan Aid, and TikTok BillWhat’s the story? President Joe Biden signed a bill that approved aid for Ukraine, Israel, and Taiwan, which could lead to a ban read more... Taiwan -

Protests Grow Nationwide as Students Demand Divestment From IsraelUpdated Apr. 23, 2024, 11:00 a.m. EST Protests are growing on college campuses across the country, inspired by the read more... Advocacy

Protests Grow Nationwide as Students Demand Divestment From IsraelUpdated Apr. 23, 2024, 11:00 a.m. EST Protests are growing on college campuses across the country, inspired by the read more... Advocacy -

IT: Here's how you can help fight for justice in the U.S., and... 📱 Are you concerned about your tech listening to you?Welcome to Thursday, April 18th, communities... Despite being deep into the 21st century, inequity and injustice burden the U.S. read more...

IT: Here's how you can help fight for justice in the U.S., and... 📱 Are you concerned about your tech listening to you?Welcome to Thursday, April 18th, communities... Despite being deep into the 21st century, inequity and injustice burden the U.S. read more...

Climate & Consumption

Climate & Consumption

Health & Hunger

Health & Hunger

Politics & Policy

Politics & Policy

Safety & Security

Safety & Security